For about a decade, the answer to "prove you are who you say you are" on a banking app was: hold your phone up, turn your head, blink on command. The selfie-check was cheap, fast, and good enough to satisfy regulators. A darknet tool called JINKUSU CAM, flagged by threat intelligence researchers on April 5 and now being sold openly on Telegram channels, has quietly made that entire workflow a liability.

The tool is a real-time deepfake pipeline. It takes a stolen photo, maps a 3D face mesh onto it, swaps that face onto the operator's head as they move, and pipes the output into the targeted bank's app through a virtual camera. The operator blinks, smiles, tilts their head, reads a challenge phrase, and the app sees a convincing human doing exactly what it asked. Sellers advertise it as working against Binance, BBVA, Revolut, Coinbase, and Kraken, and security researchers have so far been unable to contradict the claims.

What JINKUSU CAM Actually Does

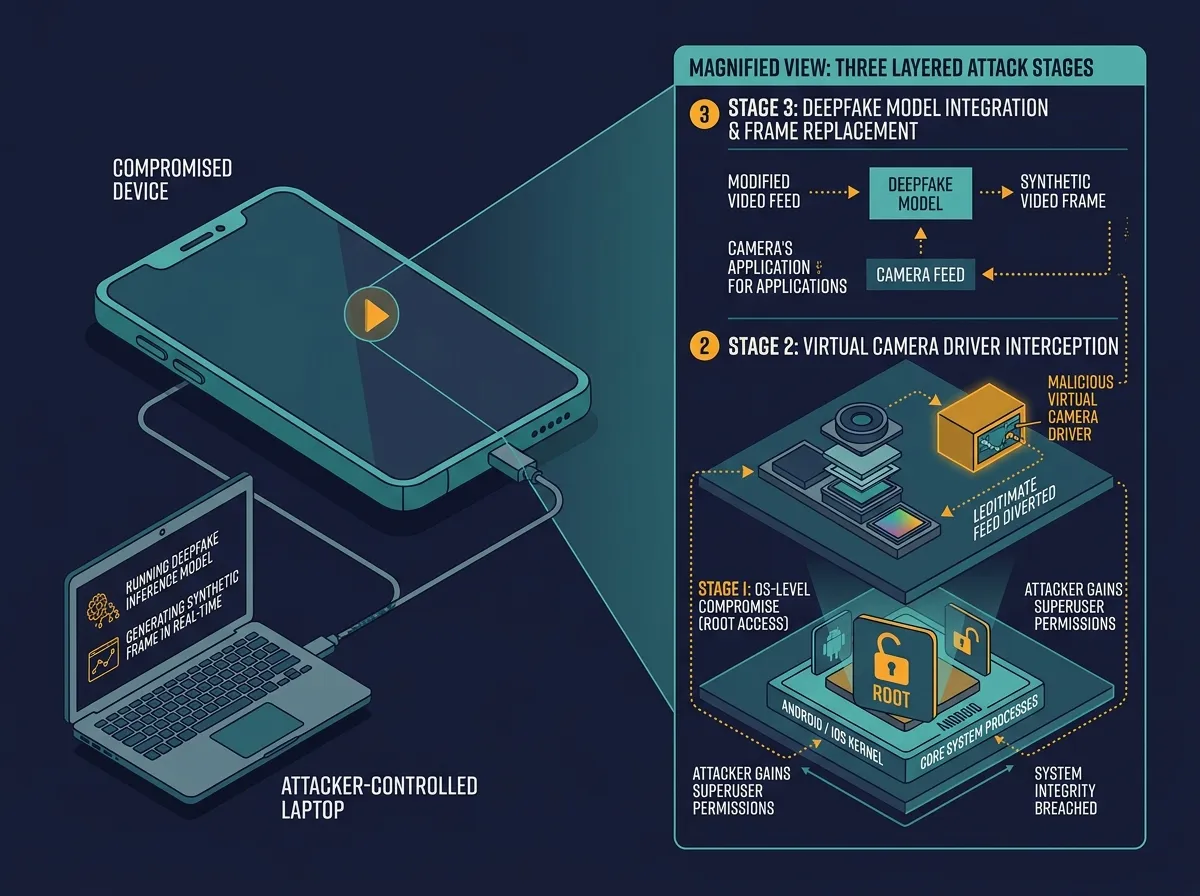

The toolkit is not one program but a bundle. At the core is a GPU-accelerated face-swap model built on the open-source InsightFace library, which has been used for years in legitimate research on facial recognition. JINKUSU CAM wraps it with three additions that matter: a facial-mesh tracker that smooths out the jawline artifacts live verification systems look for, a voice modulator with preset profiles that can match the deepfake face to a synthetic voice, and a virtual-camera driver that pipes the final output into any app expecting a real webcam or phone camera feed.

MIT Technology Review, which reviewed the tool's marketing materials alongside researchers at the identity-fraud firm Sumsub, reported on April 15 that operators can generate a working fake identity in under five minutes. The same researchers confirmed that hackers are also compromising the phone and the financial institution's own app before the fake video is fed in, so the app sees a "trusted device" with a convincing face. That combination is what makes the tool work: it is not just a better deepfake, it is a deepfake plus a compromised client environment.

The model behind the face swap belongs to the same class of technology that powers benign research demos shown at conferences. What changed is the packaging. Anthony Brown, an identity-fraud analyst at Sumsub, told MIT Technology Review that the tools are "much more effective, because they are open source and cheap to run," and that their distribution on Telegram has turned what used to be a capability of a few skilled operators into a commodity.

Why the Selfie-Check Era Is Ending

The live selfie-check became the industry standard after 2017, when regulators started pushing banks and crypto exchanges to move beyond document-only KYC. The pitch was that a moving human face, captured in real time, was harder to fake than a photograph of an ID card. That assumption held up for about seven years. It does not hold up anymore.

The problem is structural. Live video verification works by asking a camera for a feed and analyzing what comes back. If the feed is a deepfake, the analysis is of the deepfake. Some vendors claim to detect liveness by looking for skin texture, micro-blood-flow patterns, or subtle eye reflections, but those signals can be modeled. JINKUSU CAM's facial-mesh tracker is specifically designed to produce the texture and motion patterns that liveness detectors are trained on. As long as the fake is rendered to satisfy the check, the check passes.

This is the same pattern that played out with voice authentication at call centers two years ago. Banks that had deployed voice biometrics as a password-replacement found, after the launch of consumer voice-cloning tools, that the whole premise had shifted. Many of them quietly moved voice back to a secondary factor. The selfie-check is now following the same arc, about twenty-four months behind.

The Cambodia and Myanmar Fraud Pipeline

JINKUSU CAM did not appear in a vacuum. Biometric Update, which tracks identity-fraud tooling, reported on April 12 that Telegram channels selling the tool and its competitors are being run mainly out of scam compounds in Cambodia and Myanmar. These compounds have been known to the United Nations for years. A 2023 report from the UN Human Rights Office (OHCHR) estimated that at least 120,000 people in Myanmar and 100,000 in Cambodia were being held in conditions amounting to forced labor in cyber-scam operations, many of them trafficked under false pretenses.

The compounds used to specialize in romance scams and pig-butchering investment fraud. The shift to KYC bypass is a logical next step. Once an operator has a convincing stolen identity and a working deepfake, opening a bank account or crypto wallet in someone else's name unlocks the full laundering chain: pushing scam proceeds into the formal financial system and then out again. The OECD's AI incident database, which logged JINKUSU CAM on April 6, categorized the threat under "financial fraud and money laundering" rather than "identity theft" specifically for this reason.

The stolen inputs feeding the system are already plentiful. Years of data breaches have pushed hundreds of millions of scanned driver's licenses, passports, and selfies into criminal markets. The 2023 MOVEit breach alone exposed the personal information of more than 77 million people, including ID images uploaded for various government and corporate services. A tool like JINKUSU CAM is, in one sense, the final piece of infrastructure that makes all that stolen data directly useful.

What Banks and Exchanges Can Do Now

There is no silver bullet. The short-term response most large institutions are considering falls into three categories:

- Device attestation, using hardware-level signals from the phone itself (secure enclave, integrity checks) to confirm the camera feed actually originated from a real, uncompromised device rather than a virtual one.

- Out-of-band verification, adding a step that cannot be faked by a virtual camera: a government-backed digital ID, a video call with a trained human agent, or a physical branch visit for higher-risk accounts.

- Behavioral risk scoring, leaning harder on signals that survive deepfakes: transaction patterns, device history, IP reputation, and the timing of account activity after verification.
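As a rough illustration of how these three categories might fit together, the Python sketch below scores an onboarding attempt using only signals that do not depend on what the camera shows, then decides whether to escalate to an out-of-band step. Every field name, weight, and threshold here is a hypothetical chosen for illustration; none of it is drawn from any institution's actual system, and a production model would be tuned on real fraud data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OnboardingSignals:
    """Signals that survive a deepfaked camera feed (all fields hypothetical)."""
    attestation_passed: bool        # hardware-backed device integrity check succeeded
    device_age_days: int            # how long the institution has known this device
    ip_reputation: float            # 0.0 (known bad) to 1.0 (clean)
    minutes_to_first_transfer: int  # delay between verification and first outbound transfer


def risk_score(s: OnboardingSignals) -> float:
    """Return a score in [0, 1]; higher is riskier. Weights are illustrative only."""
    score = 0.0
    if not s.attestation_passed:
        score += 0.4  # the feed may come from a virtual camera or a tampered client
    if s.device_age_days < 7:
        score += 0.2  # brand-new device with no history
    score += 0.2 * (1.0 - s.ip_reputation)
    if s.minutes_to_first_transfer < 60:
        score += 0.2  # money moves almost immediately after verification
    return min(score, 1.0)


def next_step(s: OnboardingSignals) -> str:
    """Map the score to an action; the cut-offs are assumptions, not recommendations."""
    score = risk_score(s)
    if score >= 0.6:
        return "out_of_band_verification"
    if score >= 0.3:
        return "manual_review"
    return "allow"
```

In this sketch, a failed attestation alone is enough to trigger manual review, which mirrors the article's point that a convincing face should no longer be sufficient when the device itself cannot be trusted.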

None of these are new. What is new is the pressure to actually deploy them. Binance, Coinbase, and Revolut have all publicly acknowledged the threat in the past two weeks, and Revolut confirmed on April 16 that it is accelerating a previously planned rollout of hardware-based device checks. BBVA has not commented publicly. The broader challenge is regulatory: European AML rules were drafted assuming the live selfie was a strong signal, and rewriting them takes years.

There is also a harder conversation starting inside security teams. If the live face check is no longer defensible as a primary verification method, what replaces it at scale? Government digital identity systems like India's Aadhaar or the EU Digital Identity (EUDI) Wallet are the most-cited candidates, but they are years away from global coverage. In the meantime, institutions will likely accept a higher rate of fraud on low-value accounts and reserve strong verification for accounts that can move meaningful money.
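The tiering described above can be pictured as a simple policy function. The following Python sketch is only an assumption about how such a policy might look: the check names, the two limits, and the idea of keying on a monthly transfer limit are all hypothetical.

```python
from enum import Enum


class Check(Enum):
    SELFIE = "selfie"
    DEVICE_ATTESTATION = "device_attestation"
    OUT_OF_BAND = "out_of_band"


# Hypothetical limits in an arbitrary currency unit.
LOW_VALUE_LIMIT = 1_000
HIGH_VALUE_LIMIT = 25_000


def required_checks(monthly_limit: int) -> list[Check]:
    """Return the checks an account must pass, scaled to how much it can move."""
    if monthly_limit < 0:
        raise ValueError("monthly_limit must be non-negative")
    checks = [Check.SELFIE]  # weakest tier: the selfie alone, with fraud risk accepted
    if monthly_limit > LOW_VALUE_LIMIT:
        checks.append(Check.DEVICE_ATTESTATION)
    if monthly_limit > HIGH_VALUE_LIMIT:
        checks.append(Check.OUT_OF_BAND)
    return checks
```

The design choice is that stronger checks are additive: a high-limit account still takes the selfie, but the selfie is never the only thing standing between an attacker and meaningful money.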

The Quiet End of a Trust Assumption

Every security system carries an implicit assumption about what it can trust. For ten years, banks and crypto exchanges have trusted that a camera feed was a camera feed. JINKUSU CAM has not broken any single product. It has broken that assumption, and the products built on top of it are now exposed all at once.

The interesting question is not whether this specific tool gets shut down. Telegram channels come and go, and the underlying models are open source. The interesting question is how fast the institutions that relied on live selfie verification can move to something that does not assume the camera is telling the truth. On that, the industry has a track record of moving only when losses force it to. Expect the losses first.

Sources

- Cyberscammers are bypassing banks' security with illicit tools sold on Telegram, MIT Technology Review

- New AI cybercrime tool targets crypto, bank KYC systems via deepfakes, Cointelegraph

- AI Deepfake Tools Bypass KYC, Fueling Financial Fraud in Crypto and Banking, OECD AI Incident Monitor

- KYC bypass tools sold on Telegram to defeat biometric checks, Biometric Update

- AI Fake IDs and the New KYC Risk, Sumsub