OpenAI just made its most aggressive move yet to capture the enterprise market. On February 5, the company unveiled Frontier, a platform designed to let businesses build, deploy, and manage AI agents that don't just answer questions but actually do work. Think less "chatbot" and more "digital employee," one that can navigate CRM systems, pull data from warehouses, and execute multi-step tasks while a human manager monitors its performance from a dashboard.

The launch came alongside GPT-5.3-Codex, the company's most capable coding model to date, which OpenAI says was partly built by earlier versions of itself. Together, the two announcements signal that OpenAI is done treating enterprise customers as an afterthought and is ready to compete directly with Salesforce, Microsoft, and a growing crowd of startups for the corporate AI dollar.

HP, Intuit, Oracle, State Farm, Thermo Fisher, and Uber are among the first companies to adopt Frontier, and dozens of existing customers, including BBVA, Cisco, and T-Mobile, are already piloting the platform's approach to complex AI work. Sam Altman didn't mince words at the launch event: "This isn't just about faster chat. It is about giving enterprises the infrastructure to hire AI coworkers that can learn, adapt, and execute work safely alongside their human teams."

How Frontier Actually Works

Frontier's design philosophy borrows from human workforce management in ways that feel almost uncomfortably literal. The platform gives AI agents the same capabilities that new employees need to succeed: shared context about the organization, onboarding processes, hands-on learning with feedback loops, and clear permissions defining what they can and cannot access.

Building an agent starts in a chat interface that resembles ChatGPT. An administrator describes in natural language what tasks the agent should perform, and Frontier generates a working agent from that description. The agent can then connect to existing business systems, including CRM platforms, data warehouses, ticketing tools, and enterprise applications, without requiring companies to replatform their existing infrastructure.

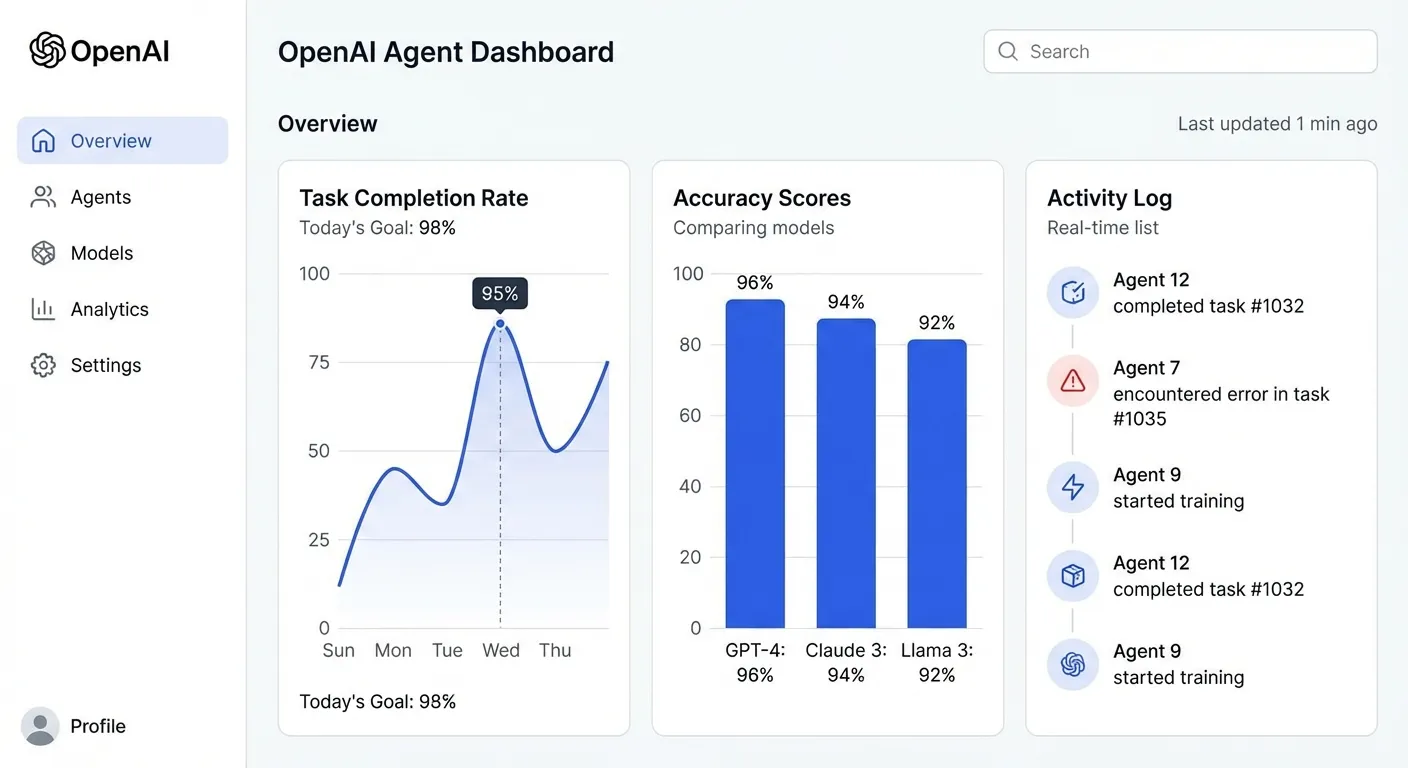

The monitoring capabilities are where Frontier differentiates itself from simpler agent frameworks. Administrators can watch agents work in real time through a dashboard that visualizes metrics including output accuracy, task completion rates, and even a "politeness" score for customer-facing interactions. Every action an agent takes is logged in a comprehensive audit trail, addressing one of the biggest concerns enterprise customers have about deploying autonomous AI: knowing exactly what it did, when, and why.
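To make the audit-trail idea concrete, here is a minimal sketch of an append-only action log with one derived metric, a task completion rate. It is not Frontier's API, which this article does not describe: `AuditEntry`, `AuditTrail`, the agent names, and the logged actions are all hypothetical, chosen only to illustrate recording what an agent did, when, and why.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    """One logged agent action: what it did, when, and why (hypothetical schema)."""
    agent_id: str
    action: str
    reason: str
    succeeded: bool
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class AuditTrail:
    """Append-only log of agent actions with a simple derived metric."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def record(self, agent_id: str, action: str, reason: str, succeeded: bool) -> AuditEntry:
        # Entries are only ever appended, never edited or removed.
        entry = AuditEntry(agent_id, action, reason, succeeded)
        self._entries.append(entry)
        return entry

    def entries_for(self, agent_id: str) -> list[AuditEntry]:
        return [e for e in self._entries if e.agent_id == agent_id]

    def completion_rate(self, agent_id: str) -> float:
        # Fraction of this agent's logged actions that succeeded; 0.0 if none logged.
        entries = self.entries_for(agent_id)
        if not entries:
            return 0.0
        return sum(e.succeeded for e in entries) / len(entries)


# Hypothetical agents and actions, for illustration only.
trail = AuditTrail()
trail.record("support-agent", "update_ticket", "customer asked for refund status", True)
trail.record("support-agent", "query_crm", "look up account owner", True)
trail.record("support-agent", "send_email", "mail server timed out", False)
trail.record("billing-agent", "issue_invoice", "monthly billing run", True)

print(f"{trail.completion_rate('support-agent'):.2f}")
```

A dashboard metric like the completion rate can be computed entirely from the log, which is why a complete audit trail matters: the summary numbers and the underlying record cannot drift apart.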

Critically, Frontier works with agents from other providers too. The platform supports agents built by Google, Microsoft, Anthropic, and third-party developers alongside OpenAI's own models. This interoperability play is strategic: rather than demanding companies go all-in on OpenAI, Frontier positions itself as the management layer that sits on top of whatever AI tools a company already uses.

The GPT-5.3-Codex Factor

The simultaneous release of GPT-5.3-Codex adds a powerful component to the Frontier story. This model unifies the frontier coding performance of GPT-5.2-Codex with the reasoning capabilities of GPT-5.2, delivering both in a package that runs 25% faster than its predecessor.

The headline detail: GPT-5.3-Codex helped build itself. The Codex team used early versions of the model to debug its own training process, manage deployment logistics, and diagnose test results. OpenAI says this is the first time one of its models was "instrumental in creating itself," a milestone that sounds like science fiction but reflects how capable these systems have become at software engineering tasks.

On SWE-Bench Pro, a rigorous evaluation of real-world software engineering ability, GPT-5.3-Codex achieves state-of-the-art performance. The model can handle long-running tasks that involve research, tool use, and complex execution chains. Users can steer and interact with it while it works without losing context, making it function more like a pair programmer than a code generator.

OpenAI flagged one notable safety consideration: GPT-5.3-Codex is the first model the company classifies as "High" capability in the cybersecurity domain under its Preparedness Framework. That classification triggers additional safeguards, acknowledging that a model this capable at writing and modifying code also carries risks if misused.

What This Means for Enterprise Software

Frontier's launch throws down the gauntlet to the entire enterprise software industry. If AI agents can perform tasks that previously required dedicated SaaS applications, the traditional software licensing model faces disruption. Why pay per-seat fees for a project management tool when an AI agent can coordinate workflows directly? Why subscribe to a specialized analytics platform when an agent can query your data warehouse and generate reports on demand?

The early results are striking. According to OpenAI, a major manufacturer using Frontier agents reduced production optimization work from six weeks to one day. A global investment company deployed agents across its sales process, freeing up over 90% of salespeople's time to spend with actual customers rather than managing data and generating reports.

Fortune reported that Frontier "could reshape enterprise software" by collapsing the distinction between tools and coworkers. The platform doesn't just automate specific tasks; it creates flexible agents that can learn new responsibilities as business needs change. That adaptability is what makes traditional software companies nervous, because their products are built for specific use cases while agents can evolve.

Salesforce, which has invested heavily in its own AI agent capabilities, now faces a competitor that controls the most popular consumer AI product on the planet. Microsoft, OpenAI's primary investor, occupies an unusual position: its Azure cloud services power much of OpenAI's infrastructure, but Frontier also competes with Microsoft's own Copilot enterprise offerings. That tension will likely intensify as both companies chase the same corporate budgets.

The Competition Is Already Crowded

OpenAI isn't the first company to build an AI agent management platform, and it won't be the last. Anthropic has been quietly growing its enterprise presence, with annual recurring revenue reportedly approaching $7 billion and 2026 targets of $15 billion. Google's Gemini platform includes agent capabilities that leverage the company's massive data infrastructure. Startups like Dust, CrewAI, and LangChain have been building agent orchestration tools for over a year.

What OpenAI brings to this crowded field is scale and brand recognition. ChatGPT's hundreds of millions of users create a pipeline of potential enterprise customers who already trust OpenAI's technology. The company is also betting that its model quality advantage, reinforced by GPT-5.3-Codex, will make Frontier agents more capable than competitors' alternatives.

Altman framed the competitive landscape in characteristically expansive terms: "The companies that succeed in the future are going to make very heavy use of AI. People will manage teams of agents to do very complex things." That vision, where human managers oversee squads of AI workers, represents a fundamental shift in how businesses operate. Whether Frontier delivers on that promise will depend on execution over the coming months as the platform expands beyond its initial limited rollout.

The timing is strategic. Gartner recently forecast that worldwide AI spending will reach $2.52 trillion in 2026, a 44% increase year-over-year. Enterprise infrastructure represents the largest category of that spending. OpenAI wants to ensure that a significant chunk of those dollars flows through Frontier rather than to competitors or in-house solutions.
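The two Gartner figures imply a 2025 baseline that the forecast as quoted here does not state. A quick back-of-the-envelope check, assuming the 44% growth applies to total worldwide AI spending and that both figures are rounded:

```python
forecast_2026 = 2.52  # trillions of USD, as quoted above
growth = 0.44         # year-over-year increase, as quoted above

# Derived values, not figures reported by Gartner or this article.
implied_2025 = forecast_2026 / (1 + growth)
increase = forecast_2026 - implied_2025

print(f"Implied 2025 spending: ${implied_2025:.2f} trillion")
print(f"Implied year-over-year increase: ${increase:.2f} trillion")
```

Under those assumptions, the forecast implies roughly $1.75 trillion in 2025 spending and about $0.77 trillion in new spending in 2026.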

What Happens Next

Frontier is currently available only to a limited group of enterprise customers, with broader availability planned for the coming months. The phased rollout gives OpenAI time to refine the platform based on real-world feedback before opening it to the wider market. Given the company's track record with ChatGPT and its API products, the expansion will likely be aggressive once initial kinks are worked out.

For enterprise IT leaders, the key question isn't whether to experiment with AI agents; it's which platform to bet on. Frontier's interoperability with non-OpenAI models reduces the lock-in risk that typically accompanies platform commitments. But any company that builds its agent infrastructure on Frontier is still making a strategic choice that will be difficult to reverse.

The broader implications for the workforce are worth monitoring. If Frontier agents can genuinely perform tasks that previously required human workers, the productivity gains could be substantial, and so could the displacement effects. OpenAI has consistently framed AI agents as augmenting rather than replacing workers, but the manufacturer case study that compressed six weeks of work into one day tells a more complicated story.

OpenAI's transformation from a research lab that happened to have a popular chatbot into a full-fledged enterprise platform company is now complete. Frontier isn't an experiment; it's a business that OpenAI expects to generate significant revenue as it chases its reported $30 billion target for 2026. Whether enterprises are ready to trust AI agents with real work, and whether those agents can deliver consistently, will determine if Frontier lives up to the ambition baked into its name.

Sources

- Introducing OpenAI Frontier - OpenAI
- OpenAI launches a way for enterprises to build and manage AI agents - TechCrunch
- OpenAI launches new enterprise platform in bid to win more business customers - CNBC
- OpenAI introduces Frontier agent management platform and new GPT-5.3-Codex model - SiliconANGLE
- OpenAI launches Frontier, an AI agent platform that could reshape enterprise software - Fortune