To put $68.13 billion in a single quarter into perspective, that's more than Starbucks makes in an entire year. It's also more than Nike generates annually. NVIDIA posted that number in 90 days, and it still beat Wall Street's expectations by nearly $2 billion.

The chipmaker reported its fiscal Q4 2026 results on Wednesday, and the numbers confirmed what bulls have been arguing and skeptics have been doubting: the AI infrastructure spending cycle isn't cooling off. Total revenue climbed 73% year-over-year from $39.3 billion, driven almost entirely by data center demand that shows no sign of peaking. For a company that was worth roughly $300 billion in early 2023, before ChatGPT turned the world upside down, the scale of the transformation is staggering. NVIDIA now sits above $3 trillion in market capitalization, and these earnings explain why.

But the headline number only tells part of the story. What's happening inside NVIDIA's revenue mix, what CEO Jensen Huang said about where things go from here, and the policy environment surrounding AI power consumption all paint a more complicated and more interesting picture than "company beats estimates again."

The Numbers That Matter

NVIDIA's Q4 revenue of $68.13 billion topped the consensus estimate of $66.21 billion by about $1.9 billion. That might sound like a routine beat, but context matters. Guidance heading into the quarter was approximately $65 billion, meaning NVIDIA outperformed its own projections by roughly $3 billion. In the chip business, where production timelines and supply constraints make revenue highly predictable, a $3 billion upside surprise signals that demand is running ahead of even management's expectations.
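The two beats described here are simple differences, and a quick sketch makes the relative sizes easier to compare. The snippet below uses only the figures quoted in this article; the variable names and the `beat` helper are illustrative, and the guidance figure is the article's approximate "$65 billion" rather than an exact midpoint.

```python
# Figures in billions of USD, as quoted in the article.
revenue = 68.13
consensus = 66.21
guidance = 65.0  # "approximately $65 billion" (approximate by the article's own wording)


def beat(actual, expected):
    """Return (absolute beat in $B, beat as a percentage of the expected figure)."""
    diff = actual - expected
    return diff, diff / expected * 100


vs_consensus = beat(revenue, consensus)
vs_guidance = beat(revenue, guidance)

print(f"vs. consensus: +${vs_consensus[0]:.2f}B ({vs_consensus[1]:.1f}%)")
print(f"vs. guidance:  +${vs_guidance[0]:.2f}B ({vs_guidance[1]:.1f}%)")
```

On these inputs the beat works out to about 2.9% against consensus and about 4.8% against the company's own guidance, which is why the second comparison is the more notable one.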

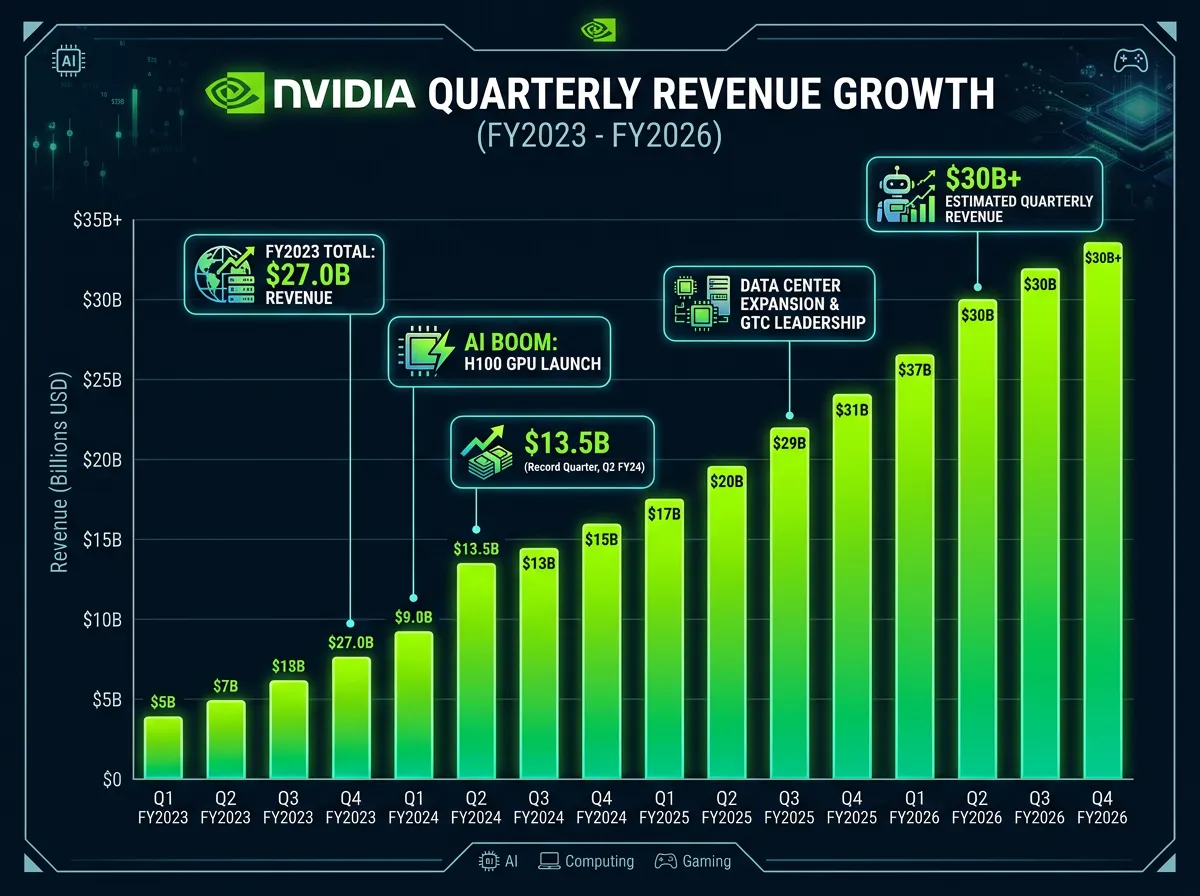

The full fiscal year 2026 totaled $215.9 billion in revenue, up 65% from the prior year. To frame that number historically: NVIDIA's entire annual revenue in fiscal year 2023 was $27 billion. The company has grown nearly eightfold in three years. There isn't a precedent for this kind of revenue acceleration at this scale in the semiconductor industry, and probably not in the broader tech sector either.
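To make the eightfold figure concrete, here is a minimal sketch of the growth multiple and the compound annual growth rate it implies. It relies only on the two annual revenue figures quoted above; the prior-year revenue is back-calculated from the rounded 65% growth rate, so treat that value as an approximation rather than a reported number.

```python
# Annual revenue in billions of USD, as quoted in the article.
fy2023_revenue = 27.0
fy2026_revenue = 215.9
years = 3  # fiscal 2023 -> fiscal 2026

# Overall growth multiple over the period.
multiple = fy2026_revenue / fy2023_revenue

# Compound annual growth rate: the constant yearly rate that would
# produce the same multiple over the same number of years.
cagr = multiple ** (1 / years) - 1

# Prior-year revenue implied by "up 65%" (approximate: 65% is a rounded figure).
implied_fy2025_revenue = fy2026_revenue / 1.65

print(f"growth multiple: {multiple:.2f}x")
print(f"implied CAGR:    {cagr * 100:.1f}%")
print(f"implied FY2025:  ${implied_fy2025_revenue:.1f}B")
```

An eightfold increase over three years is equivalent to revenue roughly doubling every year, which is the sense in which the growth has no obvious precedent at this scale.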

The forward guidance may be the most telling data point of all. NVIDIA projected Q1 fiscal year 2027 revenue of approximately $78 billion, plus or minus 2%. That implies the company expects sequential growth of roughly $10 billion in a single quarter. Wall Street had been modeling somewhere in the $75 billion range, so even the guidance beat estimates. When a company is already posting $68 billion quarters and still accelerating, the "overheating" narrative gets harder to sustain.
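The guidance range and the implied sequential growth can be sketched the same way. The inputs below are the figures quoted in this article; the Street number is the article's approximate "$75 billion range", so the comparison is indicative only.

```python
# Figures in billions of USD, as quoted in the article.
q4_revenue = 68.13
guide_mid = 78.0
tolerance = 0.02        # "plus or minus 2%"
street_estimate = 75.0  # approximate, per the article's "$75 billion range"

# Low and high ends of the guided range.
guide_low = guide_mid * (1 - tolerance)
guide_high = guide_mid * (1 + tolerance)

# Sequential growth implied by the midpoint.
seq_growth = guide_mid - q4_revenue
seq_growth_pct = seq_growth / q4_revenue * 100

print(f"guided range: ${guide_low:.2f}B to ${guide_high:.2f}B")
print(f"sequential growth at midpoint: +${seq_growth:.2f}B ({seq_growth_pct:.1f}%)")
print(f"low end above Street estimate: {guide_low > street_estimate}")
```

Under these assumptions even the low end of the guided range sits above the roughly $75 billion the Street had modeled, and the midpoint implies sequential growth of about 14.5%.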

Data Centers Are the Whole Story Now

The most striking detail in the earnings report isn't the top-line number. It's the composition. Data center revenue hit $62.3 billion for the quarter, ahead of the $60.69 billion analysts had expected. That means data centers accounted for over 91% of NVIDIA's total sales. Three years ago, gaming was still a meaningful revenue driver. Today, NVIDIA is functionally a data center company that happens to also make gaming GPUs.

This concentration tells you exactly who NVIDIA's real customers are. Microsoft, Google, Amazon, Meta, Oracle, and a growing list of sovereign AI projects are building out GPU clusters at a pace that dwarfs anything the cloud computing buildout required. Each new large language model, each multimodal AI system, each enterprise AI deployment requires more compute than the last. And NVIDIA's GPUs, from the earlier H100 and H200 chips to the current Blackwell generation, remain the default choice for AI training and increasingly for inference workloads too.

Jensen Huang addressed this dynamic directly on the earnings call, discussing the upcoming Rubin GPU architecture that will succeed the current Blackwell generation. The Rubin ramp is significant because it signals NVIDIA's confidence that demand won't just persist but grow. You don't commit billions in R&D and manufacturing capacity to a next-generation architecture if you think the market is about to plateau. Huang has consistently argued that the world is in the early innings of a multi-decade AI infrastructure buildout, and NVIDIA is making capital allocation decisions that back up that thesis.

The data center dominance also creates a concentration risk that bears watching. When 91% of your revenue comes from a single end market, any slowdown in that market hits the entire business. So far, the hyperscalers show no signs of pulling back. Gartner recently projected that global AI spending will reach $2.52 trillion this year, and a substantial chunk of that flows directly to NVIDIA. But the dependency is real, and it's the primary vulnerability that skeptics cite.
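A rough sensitivity sketch shows what that concentration means in practice. The revenue figures are the ones quoted in this article; the decline scenarios are purely hypothetical illustrations, not forecasts, and the sketch assumes all non-data-center revenue stays flat unless told otherwise.

```python
# Quarterly figures in billions of USD, as quoted in the article.
total_revenue = 68.13
data_center_revenue = 62.3
other_revenue = total_revenue - data_center_revenue

# Share of total sales coming from data centers.
dc_share = data_center_revenue / total_revenue


def total_change_pct(dc_change_pct, other_change_pct=0.0):
    """Percentage change in total revenue for given segment-level changes."""
    new_total = (
        data_center_revenue * (1 + dc_change_pct / 100)
        + other_revenue * (1 + other_change_pct / 100)
    )
    return (new_total / total_revenue - 1) * 100


print(f"data center share: {dc_share * 100:.1f}%")
for dc_drop in (-10, -20, -30):  # hypothetical scenarios only
    print(f"data center {dc_drop}% -> total {total_change_pct(dc_drop):.1f}%")
```

With the other segments held flat, a given percentage drop in data center revenue passes through to total revenue almost one for one, because the change in the total is simply the drop multiplied by the data center share.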

The "Is AI Overheating?" Debate

NVIDIA's earnings landed in the middle of a broader investor argument about whether the AI trade has gotten ahead of itself. The concern isn't that AI is fake or that the technology doesn't work. It's that the amount of capital pouring into AI infrastructure may be outpacing the revenue that AI applications actually generate for the companies doing the spending.

The math behind this concern is straightforward. Microsoft, Google, Amazon, and Meta collectively plan to spend well over $200 billion on capital expenditures this year, much of it on AI infrastructure. Those companies need to see returns on that investment in the form of higher cloud revenue, better ad targeting, new product categories, or productivity gains that justify the outlay. If the returns don't materialize at scale, the spending cycle slows, and NVIDIA's growth rate comes back to earth.

The emergence of efficient competitors like DeepSeek has added another dimension to this debate. If Chinese labs can train competitive models at a fraction of the cost, does that undermine the argument for ever-larger GPU clusters? The counterargument, which Huang has made repeatedly, is that efficiency gains increase demand rather than reduce it. Cheaper inference means more applications become economically viable, which drives more inference volume, which requires more GPUs. It's the same dynamic that played out with cloud computing: lower costs didn't reduce spending, they expanded the market.

NVIDIA's Q4 numbers lend weight to the bull case. If spending were about to slow, you'd expect to see it in order patterns, delivery timelines, or forward guidance. Instead, NVIDIA beat on every metric and guided higher than the street expected. That doesn't prove the boom will last forever, but it does suggest that the current quarter isn't the inflection point bears have been predicting.

The Power Problem No One Can Ignore

One development that didn't get enough attention alongside NVIDIA's earnings was the White House announcement, made the same week, of the "Rate Payer Protection Pledge." The initiative requires tech companies to provide their own power for AI data centers rather than drawing from existing utility grids. It's a direct response to the energy demands that GPU-dense facilities are placing on local power infrastructure.

This policy shift matters for NVIDIA's long-term trajectory. Every GPU sold needs electricity to run, and the power consumption of modern AI data centers is staggering. A single large-scale training cluster can draw as much power as a small city. Utilities in Virginia, Texas, and other data center hubs have already flagged capacity constraints, while chip tariffs and trade policy add another layer of complexity to the supply chain.

Stacy Rasgon, a semiconductor analyst at Bernstein, noted that power constraints could become "the most meaningful bottleneck for AI infrastructure scaling over the next three to five years." If data center operators can't secure enough power, it doesn't matter how many GPUs NVIDIA can ship. The ceiling on AI infrastructure growth could end up being set by power grids, not chip fabs. NVIDIA's hardware is only useful if you can plug it in.

The Rubin architecture, which Huang discussed on the earnings call, is expected to deliver meaningful improvements in performance per watt. That's not accidental. NVIDIA knows that energy efficiency is becoming a competitive differentiator, not just a nice-to-have. The company that can deliver the most AI compute per kilowatt of electricity will have a structural advantage as power constraints tighten.

From $300 Billion to $3 Trillion: Anatomy of a Transformation

NVIDIA's current position deserves a step back for historical context, because the speed of this transformation has no real analog in the semiconductor business. In January 2023, NVIDIA was a $300 billion company. It made good GPUs for gaming and had a growing but unproven data center business. The company's annual revenue that year was $27 billion. A decent business, but not one that suggested world-changing scale.

Then the ChatGPT boom took hold, and the entire AI infrastructure stack rearranged itself around NVIDIA's hardware. Within 18 months, the company's market cap had multiplied tenfold. Revenue grew from $27 billion to $60 billion to $130 billion to now $216 billion in consecutive fiscal years. The stock went from roughly $150 to over $1,000 in pre-split terms (the shares split 10-for-1 in mid-2024). No company in the history of the S&P 500 has ever scaled this fast at this size.

What makes this different from previous tech booms is the nature of the demand curve. During the dot-com bubble, companies were buying infrastructure for projected users that never materialized. During the crypto mining boom, GPU demand was tied to speculative token prices that could (and did) collapse overnight. The current AI spending cycle is driven by the largest, most profitable companies on earth making strategic bets with their own cash flows. Microsoft isn't financing GPU purchases with venture capital money; it's spending operating profits. That doesn't make the spending immune to correction, but it does mean the funding source is far more durable than in previous tech cycles.

The risk, of course, is that NVIDIA has become so central to the AI infrastructure story that any disruption, whether from competition, regulation, supply chain issues, or a slowdown in hyperscaler spending, would have outsized market impact. Lisa Su's AMD is pushing hard with its MI300X and MI400 accelerators. Google's TPUs handle an increasing share of internal AI workloads. Amazon's Trainium chips are gaining traction in AWS. And the Chinese market, where export restrictions limit NVIDIA's highest-end products, is developing domestic alternatives. NVIDIA's dominance is real, but it's being challenged from multiple directions simultaneously.

The Verdict

NVIDIA's $68 billion quarter answered one question definitively: the AI infrastructure spending cycle is still accelerating in early 2026. The company beat on revenue, beat on data center sales, beat on earnings, and guided meaningfully above expectations for the next quarter. If you were waiting for signs that the music was about to stop, this wasn't the report that delivered them.

But the bigger question, whether the aggregate AI investment cycle will generate returns sufficient to justify the trillions being spent, doesn't get answered by a single company's earnings report. NVIDIA can keep posting record quarters right up until the moment its customers decide the returns aren't there. That decision point doesn't appear imminent based on current spending patterns, but it's the tail risk that keeps bears engaged.

For now, NVIDIA's position is about as strong as any company's can be. It controls the picks and shovels in the biggest infrastructure buildout since the internet itself, and the $78 billion guidance for next quarter suggests that the customers buying those picks and shovels aren't planning to stop digging anytime soon. The power constraints, the competitive threats, and the return-on-investment questions are all real. But they're medium-term challenges for a company that, today, just printed one of the most dominant earnings reports in the history of the technology industry.

Sources

- NVIDIA Corporation Q4 Fiscal Year 2026 Earnings Report, filed February 2026

- Stacy Rasgon, Bernstein Research, semiconductor industry analysis on AI infrastructure power constraints, February 2026

- Gartner Inc., "Global AI Spending Forecast," February 2026

- Jensen Huang, NVIDIA CEO, Q4 FY2026 Earnings Call, remarks on Rubin architecture and AI infrastructure demand

- White House Office of Science and Technology Policy, "Rate Payer Protection Pledge" announcement, February 2026