Intel just made one of the most significant GPU hires in its 57-year history, and it's someone Nvidia and AMD know very well. Eric Demers, the engineer behind some of ATI's and AMD's best-known graphics architectures, including the R300 and R600 chips, has joined Intel as Senior Vice President of GPU Architecture. CEO Lip-Bu Tan announced the move at the Cisco AI Summit, adding that it took "some persuasion" to land him.

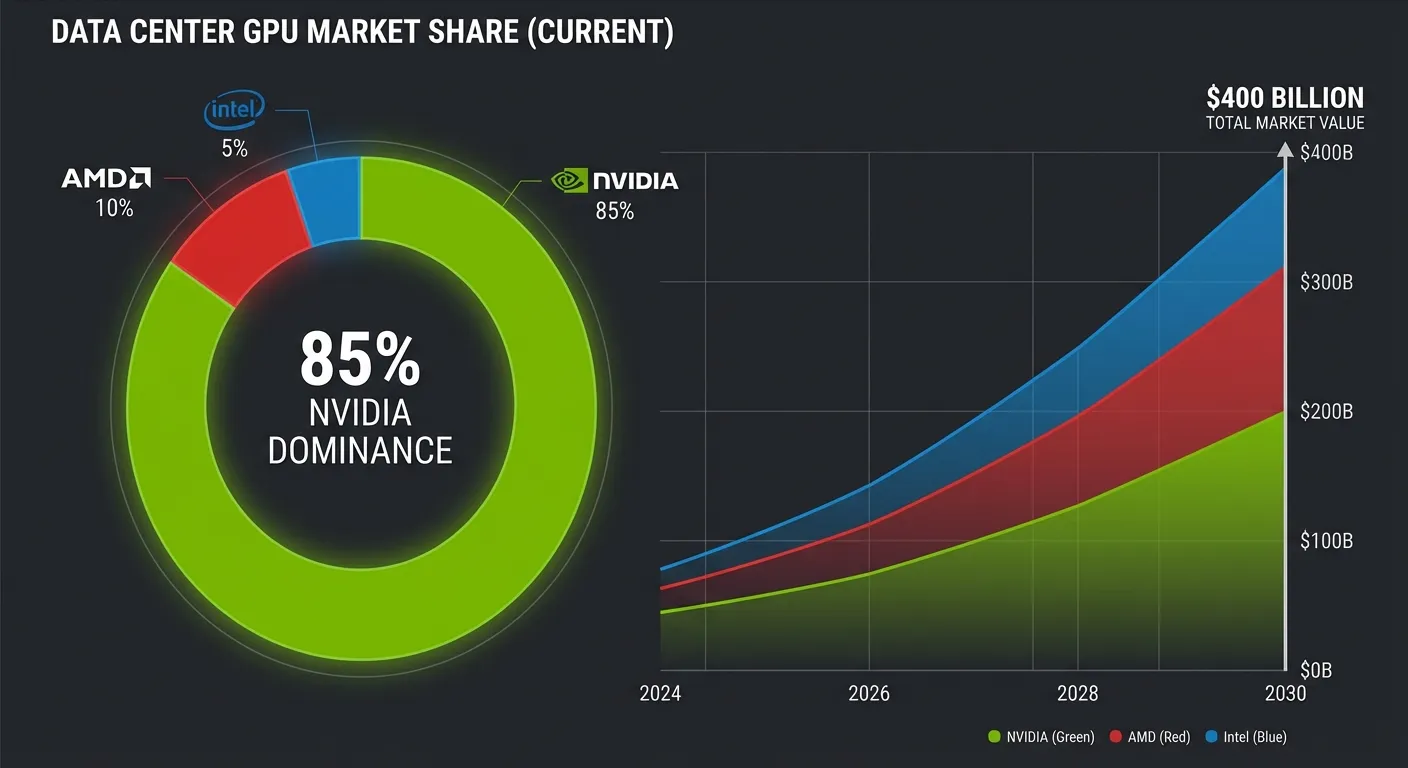

That's an understatement. Demers spent 14 years at Qualcomm leading the Adreno GPU team, and before that he served as CTO of AMD's graphics division following the 2006 acquisition of ATI. He's one of a handful of engineers on the planet who have shipped successful GPU architectures at scale across multiple companies. Now Intel is betting he can do it again, this time in a data center GPU market where Nvidia holds roughly 80% share and has spent nearly two decades building the software ecosystem that keeps customers locked in.

The Hire That Signals a Strategic Shift

This isn't Intel dabbling in graphics anymore. Under Lip-Bu Tan, who became CEO in March 2025, the company has elevated GPU development from a side project to a core strategic pillar. Demers is the latest in a string of high-profile hires in this push, following former TSMC Senior Vice President Wei-Jen Lo, who joined last year to strengthen Intel's manufacturing capabilities.

The GPU initiative will operate under Kevork Kechichian, executive vice president and general manager of Intel's data center group, who was himself hired in September 2025 as part of a broader engineering talent offensive. Intel is building a GPU roadmap spanning consumer, data center, and AI segments based on its Xe, Xe2, and newly introduced Xe3 architectures. Recent product announcements include the Crescent Island data center GPU with 160GB of memory and the Jaguar Shores accelerator aimed at rack-scale AI solutions.

But here's the context that matters: Intel's track record with GPUs has been, to put it diplomatically, uneven. The company failed to hit a modest $500 million revenue target for its Gaudi AI accelerator chips in 2024. Its Arc consumer graphics cards were met with lukewarm reviews and driver issues at launch. And Sachin Katti, the executive appointed to lead Intel's newly independent AI Group, left in November 2025 to join OpenAI. The Demers hire signals that Intel knows the previous approach wasn't working and is serious about starting over with new leadership.

Why Nvidia Is Harder to Catch Than It Looks

If you're wondering why Intel can't just throw its massive R&D budget at the problem and close the gap, the answer is four letters: CUDA. Nvidia's proprietary software platform has spent nearly two decades becoming the default environment for AI researchers, machine learning engineers, and data scientists worldwide. It's not just a programming framework. It's an ecosystem of libraries, tools, pre-trained models, and institutional knowledge that represents billions of dollars in collective investment by the AI community.

Switching from CUDA to an alternative isn't like switching from one spreadsheet app to another. It means rewriting codebases, retraining teams, and revalidating results. Even when alternatives like AMD's ROCm or Intel's oneAPI offer competitive performance on paper, the switching costs keep most organizations locked into Nvidia's ecosystem. That's the moat, and it's wider than any hardware specification gap.

Nvidia also moves fast. While Intel has been reorganizing and hiring, Nvidia sealed a 50-year deal for its first overseas headquarters in Taipei and continues to dominate AI infrastructure spending. Gartner projects global AI spending will reach $2.52 trillion in 2026, and Nvidia is positioned to capture the lion's share of the hardware budget.

The Case for Why Intel Can Actually Compete

Despite all of that, there's a credible argument that the AI chip market is about to get a lot more competitive, and Intel's timing may be better than it looks.

First, the market is enormous and growing fast enough to support multiple winners. By some industry estimates, the global GPU market will reach $400 billion by 2030, driven primarily by enterprise AI adoption. No single company, not even Nvidia, can supply enough chips to meet that demand. High-bandwidth memory, or HBM, has already become one of the scarcest components in the AI supply chain, and those bottlenecks open doors for alternative architectures.

Second, Intel brings something neither AMD nor any startup challenger can match: its own manufacturing operation. Intel Foundry gives the company the ability, in principle, to produce its own chips without depending on TSMC, the Taiwanese manufacturer that fabricates Nvidia's GPUs, although Intel's current Arc and Gaudi chips are still built by TSMC. In a world where chip tariffs and geopolitical tensions are reshaping supply chains, that kind of vertical integration is a genuine strategic advantage.

Third, enterprise customers are actively looking for alternatives. Cloud providers like Amazon, Google, and Microsoft have all developed custom AI chips, in part because Nvidia's pricing power makes single-supplier dependency a financial risk. A hypothetical Intel GPU offering 80% of Nvidia's performance at 60% of the price would deliver about a third more performance per dollar (0.8 / 0.6 ≈ 1.33). Bundled with an x86 server ecosystem that IT departments already know, it could find a massive market without ever challenging Nvidia at the high end.

What Demers Brings to the Table

Eric Demers isn't just any GPU engineer. At ATI (later part of AMD), he helped architect the R300, the chip that broke Nvidia's stranglehold on the high-end graphics market in 2002 and proved that a determined underdog could compete with the dominant player on engineering merit. The R600 followed in 2007 as the company's first unified-shader design for PCs. At Qualcomm, he led the Adreno team, whose GPUs power Snapdragon chips in a large share of Android smartphones and handle everything from mobile gaming to on-device AI workloads.

What makes Demers particularly dangerous for Nvidia is his track record of building competitive architectures at companies that were supposed to lose. ATI was a distant second to Nvidia when the R300 turned its graphics business around. At Qualcomm, he took over Adreno, a line built on the mobile graphics business Qualcomm bought from AMD in 2009, and led it for 14 years. Intel's GPU division has more resources, more manufacturing capability, and more enterprise relationships than either of those teams had when Demers joined them.

The question isn't whether Demers can design a good GPU. He's proven that repeatedly. The question is whether Intel can build the software ecosystem, developer tools, and customer relationships to make that hardware matter in a market where CUDA is still king.

What This Really Means

Intel's GPU push under Demers is less about beating Nvidia next year and more about positioning for a market that's too big and too strategically important for any single company to own forever. If AI infrastructure spending continues on its current trajectory, the demand for compute could outstrip any single supplier's capacity by 2028 or 2029.

Lip-Bu Tan is betting that by the time that supply crunch hits, Intel will have a credible GPU architecture, a competitive software stack, and the manufacturing independence to offer something no one else can: an end-to-end AI compute solution from a single American company that doesn't depend on Taiwanese fabs.

It's one of the biggest bets Intel has made since Tan took over. And with Demers at the drawing board, it's the first time in years that bet looks like more than wishful thinking.

Sources

- Intel will start making GPUs, a market dominated by Nvidia - TechCrunch, February 3, 2026

- Intel CEO Tan Announces GPUs to Rival Nvidia - Dataconomy, February 4, 2026

- Intel Recruits Qualcomm and AMD GPU Veteran Eric Demers to Advance AI Push - TrendForce, January 20, 2026

- Intel Confirms GPU Chief Architect Hire to Challenge Nvidia - WinBuzzer, February 4, 2026