On Monday, a group of hackers uploaded two malicious versions of LiteLLM to the Python Package Index (PyPI). LiteLLM is the open-source AI gateway that sits between applications and large language models at companies like Stripe, Netflix, and Adobe. For roughly three hours before PyPI quarantined the packages, anyone who installed version 1.82.7 or 1.82.8 had their API keys, cloud credentials, SSH keys, and database passwords silently harvested and exfiltrated to a server controlled by the attackers. According to Wiz, LiteLLM is present in 36% of cloud environments. Mandiant CTO Charles Carmakal told reporters the compromise has already impacted over 1,000 SaaS environments, with projections reaching 5,000 to 10,000.

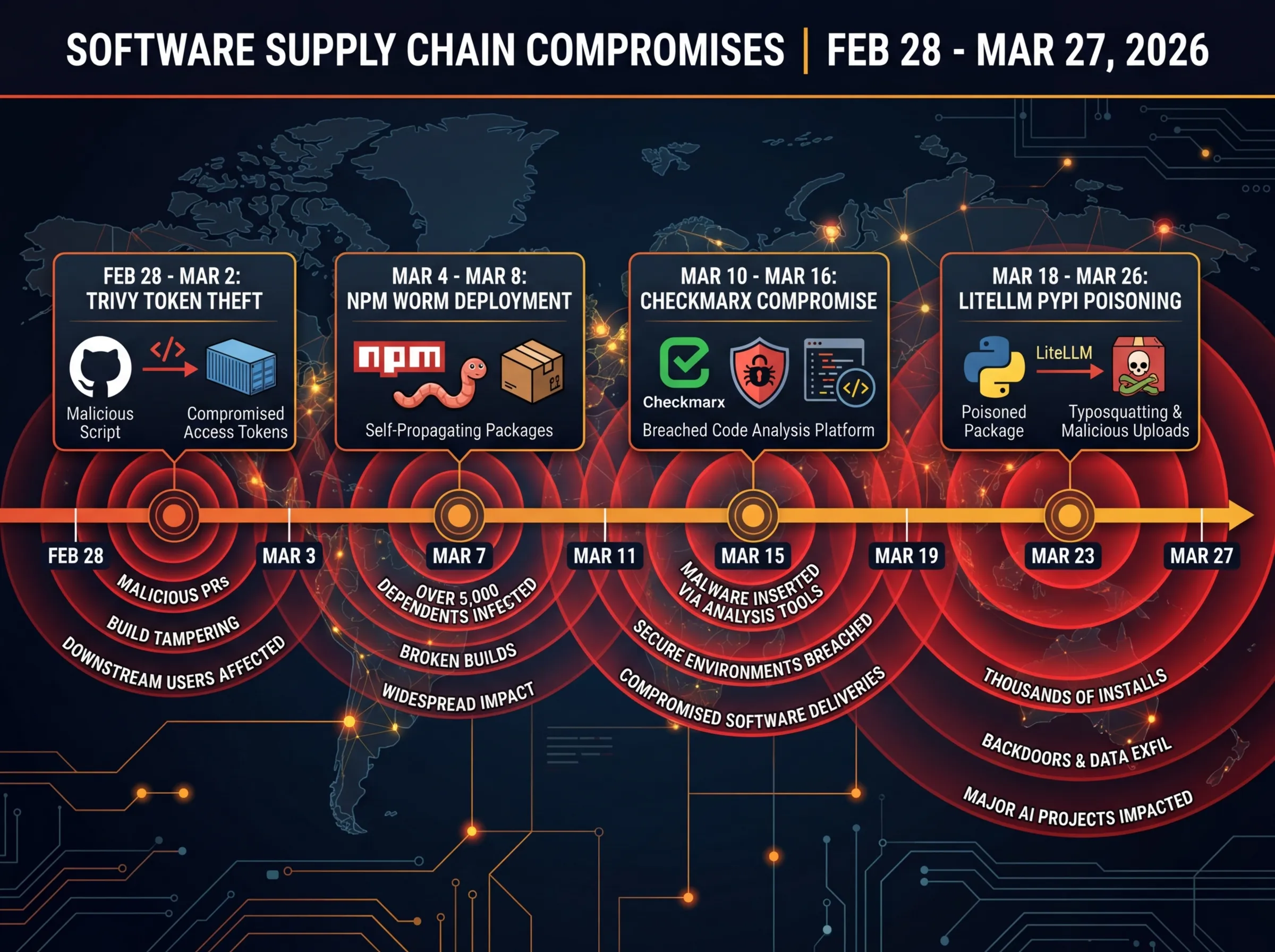

The attack is the latest phase in a sprawling campaign by a threat actor called TeamPCP that has compromised security tools, code editors, and AI infrastructure across five software ecosystems in under a month. It is also one of the clearest demonstrations yet that the AI boom has created a massive, largely undefended attack surface in the software supply chain.

How Three Hours of Poisoned Code Did This Much Damage

LiteLLM, built by YC-backed startup BerriAI, lets developers call over 100 different AI APIs (OpenAI, Anthropic, Azure, Google Vertex AI, AWS Bedrock, and others) through a single interface. It handles cost tracking, load balancing, and logging. The tool averages 3.4 million daily downloads and has over 41,000 GitHub stars. In practical terms, it touches the API keys for nearly every AI service a company uses.

The first malicious version, 1.82.7, embedded an obfuscated payload in the proxy server module. When developers ran `litellm --proxy` or imported the proxy server, the payload activated, collected every credential it could find, encrypted the haul with AES-256 and a hardcoded RSA public key, and shipped it to a command-and-control server at `models.litellm.cloud`.

Version 1.82.8, uploaded 13 minutes later, was far worse. It included everything from 1.82.7 plus a 34-kilobyte `.pth` file planted in Python's site-packages directory. At interpreter startup, Python's `site` module processes every `.pth` file in site-packages and executes any line that begins with `import`, which meant the credential-stealing payload ran in every Python process on the affected machine, not just those using LiteLLM. Even running `pip install` for an unrelated package would trigger it. If the malware detected Kubernetes tokens, it attempted to read cluster secrets, spin up privileged pods, and install a persistent backdoor that phoned home every 50 minutes for further instructions.
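To make the `.pth` mechanism concrete, here is a minimal defensive sketch that lists `.pth` files containing lines the `site` module would execute. The function names are illustrative and not taken from any vendor tool. A hit is not proof of compromise, since some legitimate packages ship executable `.pth` lines too; the output is only a starting point for manual review.

```python
import site
from pathlib import Path


def executable_pth_lines(pth_path):
    """Return the lines of a .pth file that the site module would execute."""
    try:
        text = Path(pth_path).read_text(encoding="utf-8", errors="replace")
    except OSError:
        return []
    # site.py executes lines starting with "import" followed by a space or
    # tab; other non-comment lines are treated as paths to add to sys.path.
    return [
        line for line in text.splitlines()
        if line.startswith(("import ", "import\t"))
    ]


def scan_site_packages(directories=None):
    """Map each .pth file to its executable lines, skipping files with none."""
    if directories is None:
        # Default: the site-packages directories of the current interpreter.
        directories = set(site.getsitepackages()) | {site.getusersitepackages()}
    findings = {}
    for directory in directories:
        base = Path(directory)
        if not base.is_dir():
            continue
        for pth in sorted(base.glob("*.pth")):
            lines = executable_pth_lines(pth)
            if lines:
                findings[str(pth)] = lines
    return findings
```

Calling `scan_site_packages()` with no arguments covers only the interpreter that runs it; each virtual environment on a machine would need its own scan.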

Ironically, a bug in the malware helped expose it. The `.pth` file spawned child processes that triggered the same `.pth` file during initialization, creating an exponential fork bomb that crashed affected systems. Callum McMahon, a research scientist at FutureSearch, noticed his machine had been taken down after the malicious LiteLLM version was pulled in as a transitive dependency by an MCP plugin running inside Cursor, the AI code editor. FutureSearch reported the compromise directly to PyPI, which quarantined the packages roughly three hours after the initial upload.

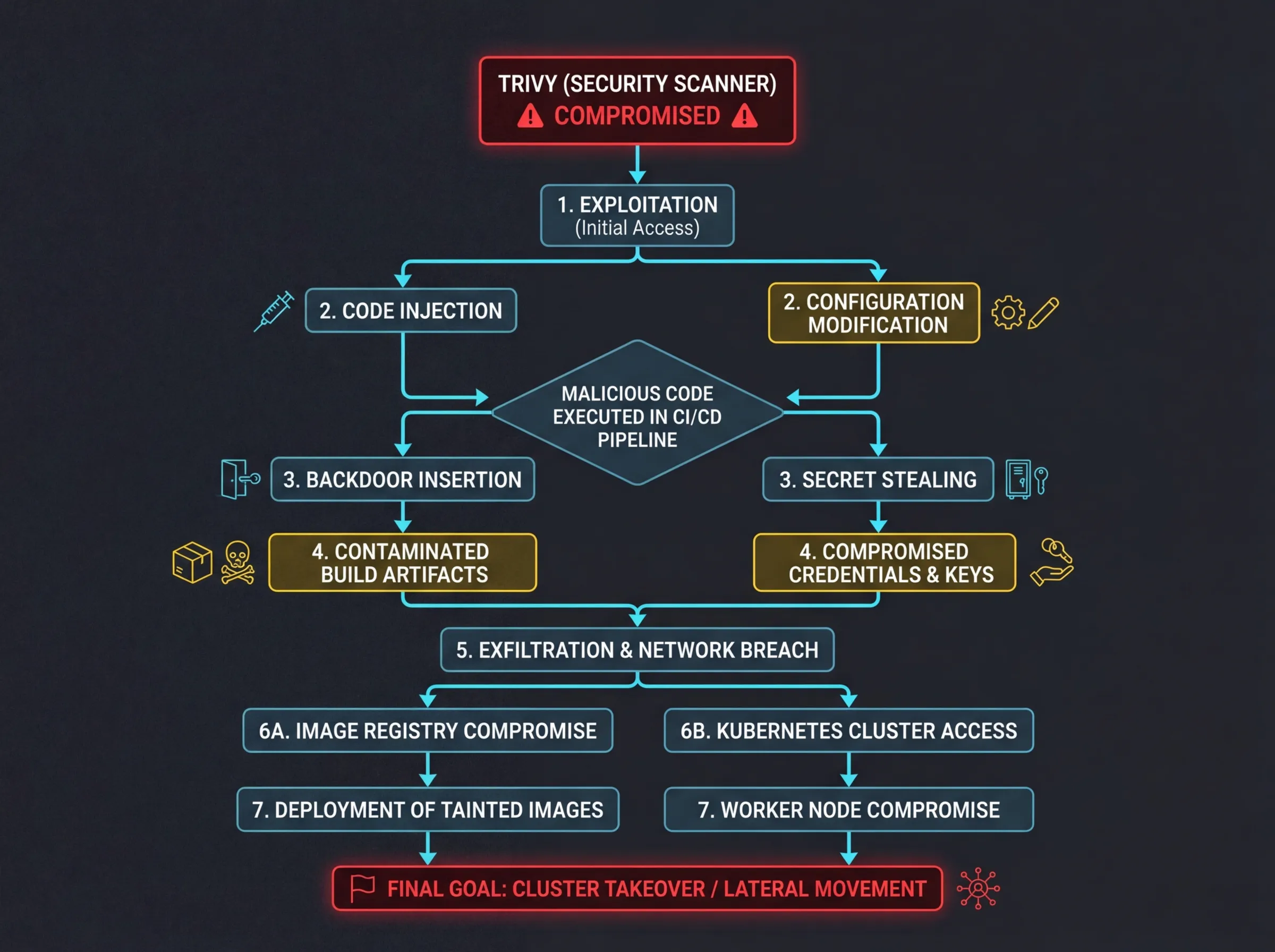

The Kill Chain: From Security Scanner to AI Gateway

TeamPCP did not breach LiteLLM directly. The group stole a privileged GitHub Actions token from Aqua Security's Trivy, a widely used vulnerability scanner, on February 28. From that foothold, they force-pushed malicious commits to 76 of Trivy's 77 version tags and deployed a self-propagating worm called CanisterWorm across 44 npm packages. Stolen CI/CD secrets from that campaign then compromised Checkmarx's KICS GitHub Action. Because LiteLLM's CI/CD pipeline ran Trivy without a pinned version, the compromised scanner exfiltrated LiteLLM's PyPI publishing credentials from the GitHub Actions runner environment, giving the attackers legitimate access to publish packages.
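The weak link in that chain was a mutable reference: a tag or branch name can be repointed at new code, while a full commit SHA cannot. As a rough sketch, the check below flags `uses:` lines in workflow YAML that are not pinned to a 40-character commit SHA. It relies on a simple regular expression rather than a YAML parser, so treat it as a first-pass filter.

```python
import re

# Matches "uses: owner/repo@ref" (optionally as a list item or quoted).
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*['\"]?([^\s'\"#]+)")
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text):
    """Return (line_number, reference) pairs for actions not pinned to a full SHA."""
    findings = []
    for number, line in enumerate(workflow_text.splitlines(), start=1):
        match = USES_RE.match(line)
        if not match:
            continue
        reference = match.group(1)
        # Local actions and Docker image references are out of scope here.
        if reference.startswith(("./", "docker://")):
            continue
        _, _, ref = reference.partition("@")
        if not FULL_SHA_RE.match(ref):
            findings.append((number, reference))
    return findings


# Hypothetical workflow fragment; the SHA is a placeholder, not a real commit.
SAMPLE = """\
steps:
  - uses: actions/checkout@0123456789abcdef0123456789abcdef01234567
  - name: Scan
    uses: aquasecurity/trivy-action@master
  - uses: ./local-action
"""

print(unpinned_actions(SAMPLE))  # [(4, 'aquasecurity/trivy-action@master')]
```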

The same 4,096-bit RSA public key appeared in payloads across Trivy, Checkmarx, and LiteLLM, confirming a single coordinated campaign. "The attackers may be sitting on many more compromises across the open-source ecosystem, waiting for guards to go down before launching the next," said Katie Paxton-Fear, a researcher at Semgrep. She noted that the timing appeared calculated: the LiteLLM attack landed during RSA Conference, when many security teams were distracted.

Who TeamPCP Is, and Why This Is Different

TeamPCP, also tracked as DeadCatx3 and ShellForce, rose to prominence in late 2025. The group exclusively targets security-adjacent tools: vulnerability scanners, infrastructure-as-code analyzers, and LLM proxies that handle API keys. They are the first threat actor documented to use Internet Computer Protocol canisters as dead-drop command-and-control infrastructure, a decentralized blockchain approach that is immune to traditional domain takedowns.

As Sonatype researchers put it: "LiteLLM sits between applications and AI providers, accessing API keys and environment variables. This positioning allows attackers to intercept secrets without breaching upstream systems." The group has reportedly pivoted from pure credential theft to active extortion, holds approximately 300 gigabytes of stolen credentials, and has been linked to collaboration with LAPSUS$, the extortion group behind previous attacks on Nvidia, Samsung, and Microsoft.

The downstream blast radius extends well beyond companies that use LiteLLM directly. Over 600 public GitHub projects had unpinned LiteLLM dependencies. Affected downstream projects include DSPy, CrewAI, MLflow, OpenHands, and several others, all of which filed emergency pull requests to pin away from the compromised versions.
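For a project wondering whether it was among those with an unpinned dependency, a simplified check of a `requirements.txt` file might look like the sketch below. It only understands plain `name==version` lines, not the full requirement-specifier grammar, and the labels it returns (`compromised`, `unpinned`, `pinned`) are this example's own vocabulary.

```python
import re

COMPROMISED = {"1.82.7", "1.82.8"}  # versions named in this article

# Package name, optional extras such as [proxy], then the version specifier.
REQ_RE = re.compile(r"^\s*([A-Za-z0-9][A-Za-z0-9._-]*)\s*(\[[^\]]*\])?\s*(.*)$")
EXACT_PIN_RE = re.compile(r"==\s*([0-9][^\s,*]*)")


def classify_requirement(line, package="litellm"):
    """Return 'compromised', 'unpinned', or 'pinned' for lines naming the package, else None."""
    line = line.split("#", 1)[0].strip()
    if not line or line.startswith("-"):
        return None  # blank lines, comments, and pip options
    match = REQ_RE.match(line)
    if not match or match.group(1).lower().replace("_", "-") != package:
        return None
    # Drop environment markers, --hash options, and line continuations.
    spec = match.group(3).split(";", 1)[0]
    spec = spec.split(" --", 1)[0].rstrip("\\ ").strip()
    exact = EXACT_PIN_RE.fullmatch(spec)
    if exact is None:
        return "unpinned"  # ranges, wildcards, or no specifier at all
    return "compromised" if exact.group(1) in COMPROMISED else "pinned"


def audit_requirements(text, package="litellm"):
    """Return (line_number, status) for every line that names the package."""
    results = []
    for number, line in enumerate(text.splitlines(), start=1):
        status = classify_requirement(line, package)
        if status is not None:
            results.append((number, status))
    return results
```

An `unpinned` result means a fresh install during the three-hour window could have resolved to one of the malicious releases; it does not by itself show that one was installed.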

What Companies Should Do Right Now

LiteLLM has paused all new releases, engaged Google's Mandiant for forensic analysis, and rotated all maintainer credentials. For companies that may have been exposed, the response checklist is straightforward but urgent:

- Rotate all secrets on any system that installed v1.82.7 or v1.82.8: API keys, cloud access keys, database passwords, SSH keys, Kubernetes tokens, and any secrets stored in environment variables

- Search filesystems for `litellm_init.pth` in site-packages, `~/.config/sysmon/sysmon.py`, and `/tmp/pglog`

- Audit Kubernetes clusters for unauthorized `node-setup-*` pods in the `kube-system` namespace

- Check network logs for connections to `models.litellm.cloud` and `checkmarx.zone`

- Pin all dependencies using cryptographic hashes and pin GitHub Actions to full commit SHAs, not mutable tags
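The filesystem and network checks in the list above can be partly automated. The following is a minimal sketch that uses only the indicators named in the checklist; the function names are illustrative, and the log matcher assumes plain-text logs in which the destination hostname appears verbatim.

```python
import site
from pathlib import Path

# Indicators taken from the checklist above.
IOC_DOMAINS = ("models.litellm.cloud", "checkmarx.zone")
IOC_HOME_PATHS = (".config/sysmon/sysmon.py",)
IOC_ABSOLUTE_PATHS = ("/tmp/pglog",)
IOC_PTH_NAME = "litellm_init.pth"


def find_file_indicators(home=None, site_dirs=None, absolute_paths=IOC_ABSOLUTE_PATHS):
    """Return the indicator paths that exist for this user and interpreter."""
    home = Path(home) if home is not None else Path.home()
    if site_dirs is None:
        # Only the current interpreter's directories; other virtual
        # environments on the machine need to be checked separately.
        site_dirs = list(site.getsitepackages()) + [site.getusersitepackages()]
    candidates = [home / relative for relative in IOC_HOME_PATHS]
    candidates += [Path(path) for path in absolute_paths]
    candidates += [Path(directory) / IOC_PTH_NAME for directory in site_dirs]
    return [str(path) for path in candidates if path.exists()]


def lines_mentioning_indicators(log_lines):
    """Yield (line_number, line) for log lines that contain an indicator domain."""
    for number, line in enumerate(log_lines, start=1):
        lowered = line.lower()
        if any(domain in lowered for domain in IOC_DOMAINS):
            yield number, line.rstrip("\n")
```

An empty result from `find_file_indicators()` is not a clean bill of health: it covers one user and one interpreter, and secrets on any machine that installed the affected versions should still be rotated.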

Why It Matters

This attack is a case study in a problem the AI industry is struggling to address even as it scales at breakneck speed. According to Sonatype's 2026 report, malicious package uploads jumped 156% year-over-year, with 454,600 new malicious packages identified in 2025 alone. The cumulative total across major package registries now exceeds 1.2 million.

The AI ecosystem is particularly vulnerable because tools like LiteLLM are middleware: they sit at the intersection of every service a company uses, holding the keys to all of them. As Cory Michal, CISO at AppOmni, explained, developer workflows "automatically pull in third-party code from the internet with limited review." In the rush to integrate AI across business operations, security hygiene around AI tooling has lagged behind adoption.

Dan Lorenc, CEO of Chainguard, called the design of GitHub Actions "irresponsible today," identifying six fundamental design failures that enabled the campaign. His point applies beyond Actions: the mutable tags, unpinned dependencies, and implicit trust in CI/CD pipelines that made this attack possible are standard practice across most of the open-source AI stack.

Three hours. That is how long the malicious packages were live on PyPI. In that window, TeamPCP potentially gained access to the cloud credentials and API keys of thousands of organizations. The AI supply chain is only as strong as its weakest dependency, and right now, most companies do not know which dependencies they are trusting with their most sensitive secrets.

Sources

- LiteLLM Official Security Update - BerriAI's advisory from co-founders Krrish Dholakia and Ishaan Jaff

- Wiz Security Analysis - Technical analysis including 36% cloud environment presence statistic

- Kaspersky Blog: TeamPCP Campaign Analysis - Overview of the full campaign chain

- FutureSearch Discovery Report - First-hand account from the team that discovered the compromise

- CSO Online: Mandiant Impact Estimates - Charles Carmakal's SaaS environment impact projections